When a government directive carries the force of law and a fifteen-day comment window counts as consultation, the question is not whether platforms comply — it is who decides what compliance means.

In the two decades I have spent working in and around civil society organisations in India, the character of state surveillance has shifted in one consistent direction: it has become cheaper, faster, and less visible. The police vehicle parked outside a union meeting was obvious. The blocked YouTube channel leaves no physical trace. I have watched organisations I know reduce their digital footprint not because they were instructed to, but because the threat had become ambient enough that caution became the default. Lawyers call this a chilling effect. A development economist might call it an information externality: the compliance cost is borne by people who never received the original order.

On March 30, 2026, the Ministry of Electronics and Information Technology published the draft Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Second Amendment Rules, 2026, with a public comment window of fifteen days closing on April 14. The official communication from Secretary S. Krishnan describes the amendments as "clarificatory and procedural." The analysis here suggests otherwise. Taken together, four provisions would make government advisories legally binding on all internet platforms as a condition of safe harbour immunity, bring every user posting news or current affairs content under Ministry of Information and Broadcasting oversight, and grant an Inter-Departmental Committee jurisdiction to investigate matters the government refers to it without any public complaint being filed. The in-person consultation on the draft ran for approximately fifty minutes at a stakeholder meeting on April 7, attended, according to The Federal, by three to four civil society organisations.

This essay is about what these amendments reveal about the Indian state's relationship with information. The specific provisions matter and will be examined closely here, but the deeper question is what regulatory architecture they are building and whose interests it serves. The answer, on the available evidence, is that the architecture primarily serves the state's interest in controlling the informational environment and, secondarily, the interest of incumbent global platforms in maintaining regulatory barriers that smaller competitors cannot afford to clear. The costs fall, as they consistently do in Indian policy design, on the organisations and individuals least positioned to absorb them: independent journalists, civil society bodies, Dalit and minority content creators, rural users dependent on digital platforms for livelihood information, and the small Indian technology firms that cannot staff permanent legal compliance departments.

That this is a development economics and political economy question, and not merely a constitutional one, is the argument this essay makes. India now has 958 million active internet users, with rural adoption growing nearly four times faster than in urban areas. For a substantial portion of this population, the platforms these rules govern are not optional conveniences. They are the infrastructure through which people access health information, report labour rights violations, receive agricultural prices, organise collective action, and earn income. Regulating them through an architecture that makes the government the sole arbiter of permissible speech, with no independent oversight and no user appeal rights, is a choice with distributional consequences that deserve examination.

The four amendments and what they change

The draft rules amend the IT (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, issued under Section 87 of the Information Technology Act, 2000. The most consequential of the four proposed changes is the entirely new Rule 3(4).

This sub-rule requires platforms to "comply with and give effect to any clarification, advisory, order, direction, standard operating procedure, code of practice or guideline issued by the ministry, by order in writing." The critical mechanism is the enforcement trigger: compliance is made a condition of "due diligence obligations of the intermediary under section 79 of the Act." Section 79 is the safe harbour provision, the legal protection that shields platforms from liability for user-generated content. Without it, a platform hosting a defamatory video, an incitement to violence, or a copyright-infringing file is directly liable as if it had published that content itself.

The Internet Freedom Foundation's initial analysis observed that the amendment attempts to "confer binding legal force" on executive instruments that bypass both parliamentary scrutiny and the established rulemaking process. Rudraksh Lakra, writing for the Indian Constitutional Law and Philosophy Blog, called the provision a departure from the constitutional framework because it requires compliance with documents — advisories, SOPs, guidelines — that carry no gazette notification, no parliamentary laying, and no obligation of prior public consultation. They can be issued informally, without notice, and take effect immediately.

Rule 8(1)'s proposed proviso extends Part III of the IT Rules, the digital media ethics code previously applicable to registered news publishers and OTT platforms, to cover "news and current affairs content hosted, displayed, uploaded, modified, published, transmitted, stored, updated or shared on the computer resources of the intermediaries by users who are not publishers."

This sweeps in YouTubers, Instagram creators, citizen journalists, political commentators, and any ordinary user posting about public affairs, placing them under the Ministry of Information and Broadcasting's regulatory machinery. Open Magazine's analysis noted that this effectively ends the distinction between publishers and distributors that has structured internet law since the safe harbour framework was established.

The Rule 14 amendment expands the Inter-Departmental Committee's jurisdiction beyond hearing complaints about Code of Ethics violations to cover "matters" referred by the Ministry on its own initiative, with no requirement that such matters arise from a complaint, relate to a specific Code violation, or that affected parties receive prior notice of a referral. This transforms the IDC from a grievance-resolution mechanism into a proactive content-surveillance instrument.

Rules 3(1)(g) and 3(1)(h), the most technically opaque of the four changes, insert carve-outs to platform data deletion obligations, clarifying that these do not override retention requirements under other laws. SFLC.in's initial statement flagged that this could subordinate the right to erasure under the Digital Personal Data Protection Act 2023 to open-ended government data demands, depending on which "other laws" are eventually invoked.

These four changes arrive as the second IT Rules amendment of 2026. The first, notified on February 10, addressed deepfakes and AI-generated content, reducing takedown timelines for unlawful content from 36 hours to 3 hours. Together, the two 2026 amendments shift the regulatory architecture in three directions: faster content removal, a broader scope of coverage, and binding government directives enforced through safe-harbour conditionality.

Delegated legislation and the bypass of parliamentary scrutiny

The IT Act 2000 was enacted to facilitate e-commerce and recognise electronic signatures. Section 87 grants the Central Government power to make rules "to carry out the provisions of this Act," with Section 87(3) requiring every rule to be "laid before each House of Parliament, while it is in session, for a total period of thirty days." This is negative laying: rules take immediate effect and remain in force unless Parliament actively objects within thirty days. In practice, Parliament almost never objects. The volume of subordinate legislation overwhelms the capacity of the Committee on Subordinate Legislation, which lacks the technical staff to meaningfully scrutinise complex digital regulation.

Through this mechanism, the government has progressively expanded the IT Act's regulatory reach far beyond its original scope. The IT Rules 2021 created an entirely new three-tier regulatory structure for digital news media and OTT platforms, entities that the parent Act neither defines nor regulates. Part III introduced content codes, oversight mechanisms, and the Inter-Departmental Committee, none of which have a statutory basis in the IT Act's text. The rules were amended in 2022, 2023, 2025, and February 2026, with a second 2026 amendment now in draft. No parliamentary committee has conducted hearings on any of these amendments. The Digital Personal Data Protection Act itself was debated for 52 minutes in the Lok Sabha before it was passed.

Lakra's IndConLawPhil analysis frames this pattern as "digital structural authoritarianism," a governance mode in which the executive builds legal infrastructure for control through the interstices of administrative law, avoiding the deliberative friction that parliamentary legislation requires. This is not unique to the digital domain. The pattern appears in the government's use of FCRA amendments to restrict civil society funding, which I examined in detail in The Unaccountable State, and in the conversion of independent research and oversight institutions into nominally independent bodies that, in practice, cannot act against the executive's preferences. What distinguishes the digital context is the speed at which the architecture is being built and the scale of its potential reach.

The proposed Rule 3(4) represents the apex of this trajectory. Executive advisories, guidelines, and SOPs, instruments that require no parliamentary laying, no advance notification, no public comment period, and no independent review, would become legally enforceable conditions of safe harbour. The Future of Privacy Forum's analysis of the 2021 rules identified that version as already "expanding the boundaries of Executive power in digital regulation" beyond what the parent statute contemplated. The 2026 draft goes further by collapsing the distinction between legislation, rules, and informal executive instruments: all three are now, for practical purposes, enforceable as conditions of doing business in India's internet market.

A Verfassungsblog analysis by Rahul Matthan argued that the DPDPA's delegation provisions "fly in the face of the Indian Supreme Court's delegation doctrine" because they provide "no coherently determinable legislative policy" from which the executive's rule-making power can be derived. The proposed Rule 3(4) raises the identical objection: what limits, other than the Ministry's own judgment, constrain which advisories it issues and what they require? The answer in the draft text is: none that are judicially reviewable in advance.

The Fact Check Unit, the courts, and a second attempt

The proposed Rule 3(4) did not emerge from a vacuum. Its most instructive antecedent is the government's attempt, in April 2023, to create a government-designated Fact Check Unit empowered to mark content about "business of the Central Government" as "fake, false, or misleading," with platforms required to act on such designations or lose safe harbour. The provision contained no definition of "fake," "false," "misleading," or "business of the Central Government." It provided no appeal mechanism for users or publishers.

The Bombay High Court struck down the provision in a split verdict, resolved by a third judge's concurrence. Justice G.S. Patel, writing the principal opinion in Kunal Kamra v. Union of India, found that "anything might be the business of government" and the terms were vague to the point of constituting "actual censorship of user content." Justice A.S. Chandurkar's tie-breaking concurrence held that the amendment violated Articles 14, 19(1)(a), and 19(1)(g) of the Constitution, failed the proportionality test established in Gujarat Mazdoor Sabha, and had not been properly laid before Parliament as Section 87(3) requires. When the government designated the Press Information Bureau as the FCU in March 2024, the Supreme Court promptly stayed that notification. On March 10, 2026, the Court again refused to stay the Bombay High Court's order while admitting the government's Special Leave Petition. The FCU remains struck down.

The 2026 draft amendments do not revive the FCU by name. What IFF, SFLC.in, and Lakra observe is that the amendments would achieve functionally comparable outcomes through a different mechanism. Under the draft, a MeitY advisory could require platforms to treat specific content as violating a "standard operating procedure" without any court order. The IDC could examine any "matter" referred by the Ministry without a complaint being filed. The scope, previously limited to content about the central government, would extend to all "news and current affairs" posted by any user. Each element of the FCU mechanism that courts found constitutionally problematic (vagueness of terms, government acting as sole arbiter, no independent review, no user notification) reappears in different regulatory dress.

This is the most significant political economy observation about the 2026 rules: they represent not a change of approach but an adaptation of method. When one instrument was struck down as ultra vires the parent Act, a new instrument was designed to achieve the same governance objective through different procedural channels.

Platform market concentration and the compliance moat

A recurring rhetorical feature of government communications about the IT Rules is that they target "Big Tech," the global platforms whose scale and opacity make regulation feel both urgent and righteous. The political economy of the amendments, examined more carefully, reveals a different set of beneficiaries.

The compliance infrastructure required under the IT Rules 2021, intensified by the 2026 amendments, includes Chief Compliance Officers, Nodal Officers, Grievance Officers resident in India, automated content-monitoring systems, multilingual content-review teams capable of operating within 3-hour takedown windows, and permanent legal escalation channels for government orders. Google and Meta together control 64% of India's digital advertising revenue, approximately ₹94,700 crore in 2025, and they can absorb this compliance infrastructure. Indian startups cannot.

Koo is the most instructive case study. India's Twitter alternative was among the award winners in the government's own Atmanirbhar Bharat App Innovation Challenge, raised approximately $60 million from Accel and Tiger Global, reached 60 million downloads, and fully cooperated with government takedown requests. It shut down on July 3, 2024. Analysts who reviewed potential acquisitions found interested parties "hesitant to deal with user-generated content due to inherent risks and regulatory complexities." The regulatory framework that nominally challenged foreign platform dominance in fact made Indian platform creation economically unviable.

An Oxera cross-country study found that safe harbour regimes are not merely legal protections but economic enablers: they allow new entrants to operate at lower capital costs by removing open-ended legal liability from the balance sheet. Weakening safe harbour by conditioning it on compliance with informal executive instruments changes the fundamental economics of platform entry. The study found that safe harbour erosion falls disproportionately on early-stage platforms with fewer resources to manage legal uncertainty, precisely the segment of the market that any "Digital India" strategy should be cultivating.

The April 7 stakeholder meeting illustrated this political economy directly. According to MediaNama founder and editor Nikhil Pahwa, the meeting left "key questions from platforms and civil society unresolved," and Pahwa described it as "a compliance exercise, with no intent to explain or answer questions." The Federal reported that civil society had sparse representation, with three to four organisations in attendance. Industry — the large platforms with compliance departments and government relationship managers — had a separate session. This is not incidental to the policy design. It reflects whose compliance matters and whose concerns do not.

Digital platform dependency and the economics of information access

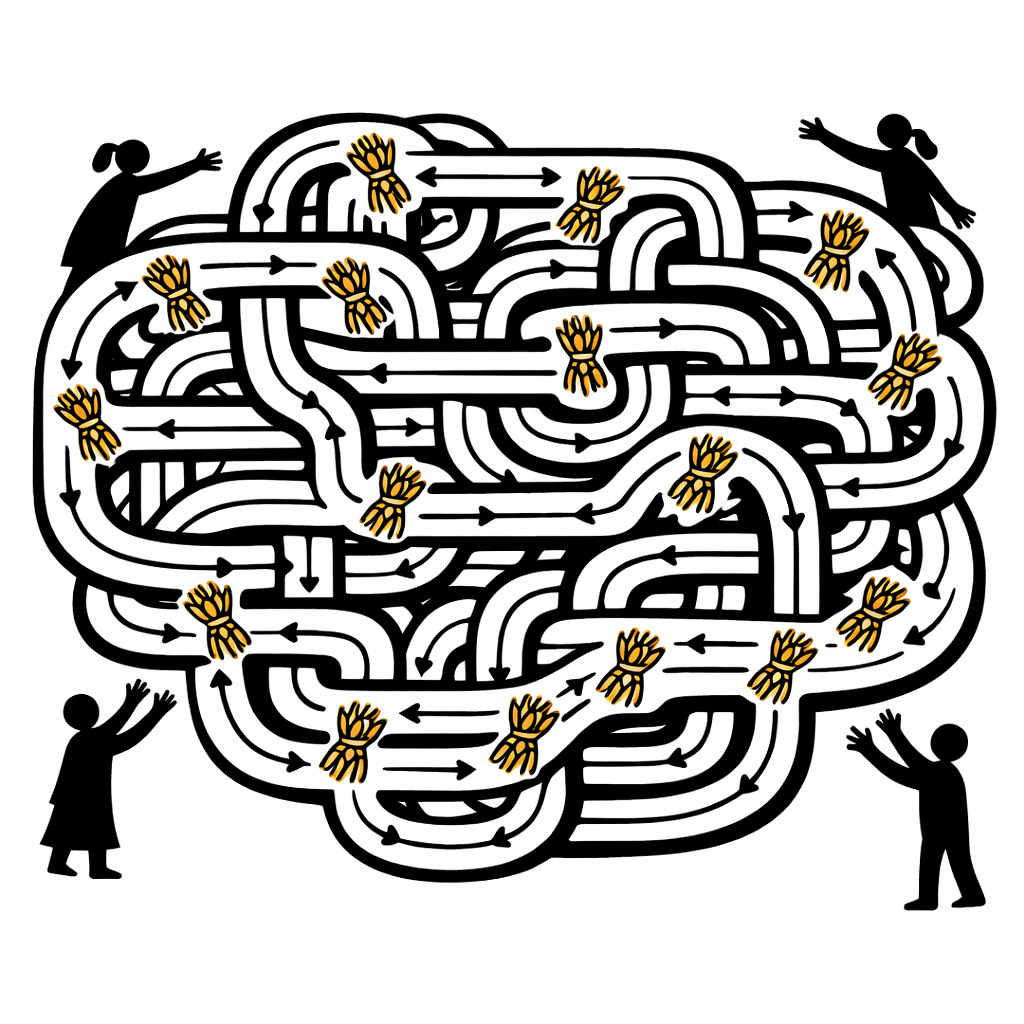

The distributional consequences of these amendments extend far beyond the question of which platforms survive. India's 958 million active internet users, a majority of them now in rural areas, have come online into an ecosystem where one messaging application and two advertising platforms constitute the effective internet for most purposes. WhatsApp is used by approximately 535 million Indians, roughly 56% of all internet users, and serves as the primary communication infrastructure for a wide range of economic and social activities. A 2024 survey of small retail businesses found 97% of MSME retailers use WhatsApp daily for inventory coordination, supplier communication, and customer orders. India has approximately 12 to 13 million kirana stores, which account for the majority of the country's fast-moving consumer goods retail. Small businesses dominate India's digital advertising landscape: 92% of Meta's Indian advertiser base comprises small and medium enterprises, and India's approximately 63 million MSMEs increasingly rely on WhatsApp Business for day-to-day commerce and customer communication.

For gig workers, an estimated 12 million people currently delivering food, driving ride-hail vehicles, and managing last-mile logistics, a number projected to reach 23.5 million by 2029-30, platform access is the mechanism through which work is allocated, income is received, and grievances are nominally filed. The IT Rules contain no provisions governing platform accountability to workers. The 2026 amendments do not change this. The accountability architecture runs exclusively from platforms to the government.

The consequences of information asymmetry are equally significant. Research by Dr. Pardeep Attri at the University of Bath, published in February 2026, documents a "triple exclusion" facing India's approximately 200 million Dalits online: algorithmic invisibility, inadequate protections against harassment, and suppression through coordinated trolling. Equality Labs found that 13% of hate content on Facebook India involves caste-based hate speech, yet Facebook's own internal documents indicate more than 95% of such content remains on the platform. A joint Global Witness and Internet Freedom Foundation investigation found that YouTube removed none of the 79 reported videos containing hate speech targeting women, Muslims, and Dalits. Content moderation systems remain overwhelmingly English-focused despite approximately 68% of Indian users consuming content in non-English languages, a figure that rises further as new users from rural areas come online.

The practical picture is this: existing content moderation fails the users most harmed by hate speech while disproportionately targeting political dissent and criticism of the government. The 2026 amendments create stronger incentives for over-censorship on political content, because that is where government advisories will focus, and introduce no new obligations to address the caste-based and religious hate speech that platforms routinely decline to remove. A regulatory framework that tightens pressure on political speech while leaving structural hate speech unaddressed is not a neutral posture. It reflects a set of governance priorities that development economists should name as such.

The internet shutdown dimension compounds this picture. India has executed more than 800 internet shutdowns since 2012, the most of any country globally. Access Now recorded 84 shutdowns in 2024 alone. The Kashmir shutdown that began in August 2019 and lasted 552 days caused an estimated ₹178.78 billion in direct economic losses in the first five months alone, with over 500,000 job losses. Human Rights Watch's 2023 investigation documented that gig workers, small traders, and daily wage labourers suffered most acutely during shutdowns, precisely the population the "Digital India" programme claims as its primary beneficiary constituency. Access Now's Namrata Maheshwari observed: "Internet shutdowns contradict India's ambitions of global leadership in AI, digital governance, and skilling."

The censorship record and what official requests look like in practice

MeitY Secretary S. Krishnan, at the April 7 stakeholder meeting, dismissed concerns about suppression of dissent as "a myth," describing government actions as remaining "within the bounds of existing legal and constitutional provisions." MediaNama's censorship tracker documented 40-plus instances of geo-blocking, account withholding, and content takedowns in March 2026 alone, including accounts belonging to cartoonist Satish Acharya (for cartoons criticising the Prime Minister's response to West Asia policy), Ambedkarite journalist Sandeep Singh, and users whose posts used phrases deemed insufficiently respectful toward elected officials.

In July 2025, India ordered X to block 2,355 accounts, including those of Reuters, Pakistani journalists, and Indian independent outlets. X described the orders as "press censorship," a characterisation that prompted no public response from MeitY. Between March 2024 and June 2025, The Wire reported that X received approximately 1,132 government takedown requests, with 70% originating from the Ministry of Home Affairs. The MHA's Indian Cyber Crime Coordination Centre, which operates the Sahyog portal that SFLC.in documented in detail, blocked 1,11,185 items between 2024 and 2025, approximately 290 takedown notices per day. At least 72 companies, including Meta, Google, and Apple, have been onboarded to Sahyog. X challenged the portal before the Karnataka High Court in March 2025.

Alt News' analysis of government orders on X found that Section 69A, the provision empowering blocking orders, was being applied to cartoons and satirical content that courts have previously held is protected speech. Newslaundry documented 55,607 URLs blocked between 2015 and 2022, of which 47.6% were blocked under Section 69A. Rule 16 of the Blocking Rules keeps all Section 69A orders strictly confidential: users are typically not informed which authority blocked content or on what grounds, making legal challenge structurally close to impossible. India topped global government requests for Meta user data in both H1 and H2 2024, surpassing the United States. YouTube removed 2.25 million videos from India in the final quarter of 2023, representing approximately 25% of global removals for that period, the highest of any country. The US Trade Representative's 2026 country report noted that India's compliance deadlines are "impractical" and flagged an "increased quantity of takedown requests as being politically motivated."

The opacity of the current consultation mirrors the opacity of the censorship infrastructure itself. In the October 2025 draft deepfake rules, MeitY explicitly told submitters that comments "shall not be disclosed to anyone at any stage." MediaNama filed an RTI in March 2026 seeking the release of those submissions; a response is pending. Raman Chima of Access Now, speaking after the April 7 meeting, observed: "In the last consultation, they added a whole new takedown provision which had not been consulted upon."

The courts and the limits of judicial protection

At least 17 petitions challenging the IT Rules 2021 were filed across multiple High Courts. The Supreme Court consolidated them and transferred all to the Delhi High Court, where a Division Bench has been hearing arguments since late 2024. The lead petitions include the Foundation for Independent Journalism (The Wire) v. Union of India, challenging Part III as creating censorship mechanisms without statutory basis; LiveLaw Media v. Union of India, arguing the three-tier grievance structure makes the executive both complainant and adjudicator; and WhatsApp LLC v. Union of India, challenging Rule 4(2)'s traceability mandate as incompatible with end-to-end encryption and violating the proportionality test from K.S. Puttaswamy v. Union of India.

Separately, three petitions before the Supreme Court challenge the Digital Personal Data Protection Act 2023 and its 2025 Rules. Filed by RTI activist Venkatesh Nayak, The Reporters Collective, and the National Campaign for Peoples' Right to Information, they target Section 44(3) of the DPDPA, which amends the RTI Act to remove the public interest override for disclosure of personal information about public functionaries, effectively removing a key transparency provision. The Supreme Court Observer notes that a bench headed by CJI Surya Kant issued notice and referred core questions to a larger bench in February 2026.

Interim protections from multiple High Courts remain in force: stays on Rules 9(1) and 9(3) from the Bombay and Madras High Courts, and the Kerala High Court's 2021 stay on Part III coercive action following LiveLaw's petition. These are partial and procedural; they do not resolve the foundational constitutional questions, and they coexist with ongoing government enforcement of the rules against which they offer only qualified protection. The Bombay High Court's FCU ruling demonstrates that litigation can constrain executive overreach, but the government has responded by enacting new rules that seek to achieve functionally comparable outcomes through alternative procedural mechanisms. The pattern is a government that treats judicial decisions as procedural obstacles to be worked around rather than as substantive constraints on its authority.

What a different regulatory architecture would look like

The comparison with the European Union's Digital Services Act is useful not as a template to be transplanted but as a demonstration that the direction of accountability in digital regulation is a political choice, not a technical inevitability. Under the DSA, platforms are primarily accountable to users and independent regulatory bodies: they must conduct systemic risk assessments, submit to independent audits, notify users of content removal with specific reasons, and provide internal appeals and out-of-court dispute mechanisms. The European Commission oversees Very Large Online Platforms directly; each member state designates an independent Digital Services Coordinator institutionally separate from the government ministries that set digital policy. India's IT Rules framework inverts this: platforms are accountable to the government, there is no independent regulator, and content moderation is driven by government directives rather than structured risk assessment.

Translating a different approach into India's political context requires specificity about what changes are feasible and what their distributional effects would be.

Safe harbour under Section 79 should be conditioned on platforms following court orders and responding to judicial process, not on compliance with informal executive advisories. This distinction exists in the original IT Act. The 2026 draft would erase it. Restoring it does not require new legislation, only a withdrawal of Rule 3(4). The government's argument that informal advisories are needed for speed is undercut by the existing framework under Section 69A, which already allows emergency blocking orders with a 24-hour turnaround: the question is not speed but accountability.

Section 69A blocking orders should require disclosure to affected parties within a defined period and include a mechanism for challenge before an independent body. The current combination of indefinite secrecy under Rule 16 of the Blocking Rules and the absence of an accessible right of appeal is incompatible with the proportionality requirements that Puttaswamy established as constitutional baselines for restrictions on fundamental rights. The government's own rationale for blocking orders, national security and public order, has well-established judicial review frameworks. There is no principled reason digital speech restrictions should be exempt from them.

Content moderation obligations need to address the harms actually documented at scale: caste hate speech, religious targeting, and gender-based harassment. These categories disproportionately affect Dalit, Muslim, and women users, and the existing rules contain no specific obligations regarding them. The 2026 amendments, which focus entirely on political content and "news and current affairs," do not address this dimension. A framework that tightens pressure on political speech while leaving structural hate speech unaddressed is not a neutral regulatory posture.

The DPDPA's amendment to the RTI Act, Section 44(3), should be reversed as a matter of basic democratic consistency. A government that claims to be building transparent digital governance should not simultaneously be removing the provision that allows citizens to access information about the conduct of public officials. As I wrote in The Unaccountable State, the FCRA amendments and associated legislation form an architecture designed to reduce scrutiny of the government while increasing government scrutiny of civil society. Section 44(3) applies the same logic to the RTI framework.

Finally, the consultation process itself requires structural reform. A fifteen-day public comment window on amendments with constitutional implications, followed by a fifty-minute stakeholder meeting whose submissions are kept confidential, is not consultation in any meaningful sense. The Digital India Act that MeitY has been signalling for several years should, if it is eventually tabled, go through parliamentary committee hearings with public testimony. The DSA took approximately two years from proposal to adoption, with extensive debate in the European Parliament. India's amendments offer fifteen days. The difference is not speed: it is whether democratic deliberation is considered a necessary input to regulation at all.

Returning to the ambient threat

The organisation whose website I could not access in 2022 still exists. It has moved some of its content to encrypted channels, reduced its social media presence, and instructed staff not to post organisational material on personal accounts. These are rational responses to an ambient regulatory environment, and they have nothing to do with the specific provisions of any particular rule. They are the chilling effect operating as it was designed to operate.

The 2026 draft amendments do not represent a rupture with previous policy. They are a continuation of a pattern of digital governance that has been building since at least 2021: expanding executive discretion, compressing consultation timelines, and using safe harbour conditionality to discipline platforms without requiring the transparency of court orders or the accountability of parliamentary legislation. That this pattern is visible in the data, in the more than 800 documented shutdowns, the 55,607 blocked URLs, the 1,11,185 items blocked through the MHA's Sahyog portal between 2024 and 2025, is what makes Secretary Krishnan's description of dissent concerns as "a myth" so difficult to reconcile with the documentary record.

The stakes of the April 14 comment deadline extend beyond the specific provisions under consultation. Each round of amendments that is adopted without significant modification normalises both the substance and the process: binding executive advisories as law, fifteen-day comment windows as adequate consultation, confidential submissions as democratic participation. The longer the architecture is in place, the more entrenched the institutional interests that benefit from it become.

For the 958 million Indians whose access to economic information, political speech, and social connection runs through the platforms these rules govern, the question of who those platforms are accountable to, and through what mechanisms, is not an abstract constitutional debate. It is the practical question of whose complaints are heard, whose content is removed, and whose presence on the internet is treated as a problem to be managed.

Further Reading

On the IT Rules 2021 and the 2026 amendments

IFF: Sound the Alarm — First Read on MeitY's Draft IT Rules Second Amendment, 2026

SFLC.in: Initial Statement on the Draft IT Rules Second Amendment, 2026

PRS Legislative Research: IT (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021

On the Fact Check Unit and judicial challenges

Verfassungsblog: The Bombay High Court Dismisses the Ministry of Truth

Supreme Court Observer: Challenge to the IT Rules 2023

Supreme Court Observer: Transfer of IT Rules Challenges

Verfassungsblog: Throwing the Delegation Doctrine to the Winds (on the DPDPA)

On digital censorship and information control

Alt News: How Section 69A Is Being Used to Silence Dissent

Newslaundry: India Blocked 55,607 Websites in Seven Years

Human Rights Watch: No Internet Means No Work, No Pay, No Food — Internet Shutdowns in India (2023)

SFLC.in: Content Takedown Through the Sahyog Portal

The Wire: X Asked to Take Down 1,400 Posts Between March 2024 and June 2025

On platform dependency, safe harbour economics, and marginalised communities

Dr. Pardeep Attri, University of Bath: Social Media Worsens Plight of Marginalised Communities in India (Phys.org, February 2026)

Oxera: The Economic Impact of Safe Harbours on Internet Intermediary Start-Ups
